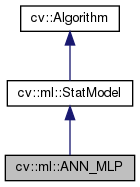

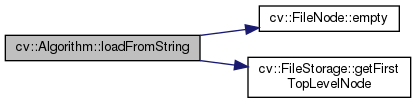

Artificial Neural Networks - Multi-Layer Perceptrons.

#include <opencv2/ml.hpp>

Public Types | |

| enum | ActivationFunctions { IDENTITY = 0, SIGMOID_SYM = 1, GAUSSIAN = 2, RELU = 3, LEAKYRELU = 4 } |

| possible activation functions More... | |

| enum | Flags { UPDATE_MODEL = 1, RAW_OUTPUT =1, COMPRESSED_INPUT =2, PREPROCESSED_INPUT =4 } |

| Predict options. More... | |

| enum | TrainFlags { UPDATE_WEIGHTS = 1, NO_INPUT_SCALE = 2, NO_OUTPUT_SCALE = 4 } |

| Train options. More... | |

| enum | TrainingMethods { BACKPROP =0, RPROP = 1, ANNEAL = 2 } |

| Available training methods. More... | |

Public Member Functions | |

| virtual float | calcError (const Ptr< TrainData > &data, bool test, OutputArray resp) const |

| Computes error on the training or test dataset. More... | |

| virtual void | clear () |

| Clears the algorithm state. More... | |

| virtual bool | empty () const CV_OVERRIDE |

| Returns true if the Algorithm is empty (e.g. More... | |

| virtual double | getAnnealCoolingRatio () const =0 |

| ANNEAL: Update cooling ratio. More... | |

| virtual double | getAnnealFinalT () const =0 |

| ANNEAL: Update final temperature. More... | |

| virtual double | getAnnealInitialT () const =0 |

| ANNEAL: Update initial temperature. More... | |

| virtual int | getAnnealItePerStep () const =0 |

| ANNEAL: Update iteration per step. More... | |

| virtual double | getBackpropMomentumScale () const =0 |

| BPROP: Strength of the momentum term (the difference between weights on the 2 previous iterations). More... | |

| virtual double | getBackpropWeightScale () const =0 |

| BPROP: Strength of the weight gradient term. More... | |

| virtual String | getDefaultName () const |

| Returns the algorithm string identifier. More... | |

| virtual cv::Mat | getLayerSizes () const =0 |

| Integer vector specifying the number of neurons in each layer including the input and output layers. More... | |

| virtual double | getRpropDW0 () const =0 |

| RPROP: Initial value \(\Delta_0\) of update-values \(\Delta_{ij}\). More... | |

| virtual double | getRpropDWMax () const =0 |

| RPROP: Update-values upper limit \(\Delta_{max}\). More... | |

| virtual double | getRpropDWMin () const =0 |

| RPROP: Update-values lower limit \(\Delta_{min}\). More... | |

| virtual double | getRpropDWMinus () const =0 |

| RPROP: Decrease factor \(\eta^-\). More... | |

| virtual double | getRpropDWPlus () const =0 |

| RPROP: Increase factor \(\eta^+\). More... | |

| virtual TermCriteria | getTermCriteria () const =0 |

| Termination criteria of the training algorithm. More... | |

| virtual int | getTrainMethod () const =0 |

| Returns current training method. More... | |

| virtual int | getVarCount () const =0 |

| Returns the number of variables in training samples. More... | |

| virtual Mat | getWeights (int layerIdx) const =0 |

| virtual bool | isClassifier () const =0 |

| Returns true if the model is a classifier. More... | |

| virtual bool | isTrained () const =0 |

| Returns true if the model is trained. More... | |

| virtual float | predict (InputArray samples, OutputArray results=noArray(), int flags=0) const =0 |

| Predicts response(s) for the provided sample(s) More... | |

| virtual void | read (const FileNode &fn) |

| Reads algorithm parameters from a file storage. More... | |

| virtual void | save (const String &filename) const |

| Saves the algorithm to a file. More... | |

| virtual void | setActivationFunction (int type, double param1=0, double param2=0)=0 |

| Initialize the activation function for each neuron. More... | |

| virtual void | setAnnealCoolingRatio (double val)=0 |

| ANNEAL: Update cooling ratio. More... | |

| virtual void | setAnnealEnergyRNG (const RNG &rng)=0 |

| Set/initialize anneal RNG. More... | |

| virtual void | setAnnealFinalT (double val)=0 |

| ANNEAL: Update final temperature. More... | |

| virtual void | setAnnealInitialT (double val)=0 |

| ANNEAL: Update initial temperature. More... | |

| virtual void | setAnnealItePerStep (int val)=0 |

| ANNEAL: Update iteration per step. More... | |

| virtual void | setBackpropMomentumScale (double val)=0 |

| BPROP: Strength of the momentum term (the difference between weights on the 2 previous iterations). More... | |

| virtual void | setBackpropWeightScale (double val)=0 |

| BPROP: Strength of the weight gradient term. More... | |

| virtual void | setLayerSizes (InputArray _layer_sizes)=0 |

| Integer vector specifying the number of neurons in each layer including the input and output layers. More... | |

| virtual void | setRpropDW0 (double val)=0 |

| RPROP: Initial value \(\Delta_0\) of update-values \(\Delta_{ij}\). More... | |

| virtual void | setRpropDWMax (double val)=0 |

| RPROP: Update-values upper limit \(\Delta_{max}\). More... | |

| virtual void | setRpropDWMin (double val)=0 |

| RPROP: Update-values lower limit \(\Delta_{min}\). More... | |

| virtual void | setRpropDWMinus (double val)=0 |

| RPROP: Decrease factor \(\eta^-\). More... | |

| virtual void | setRpropDWPlus (double val)=0 |

| RPROP: Increase factor \(\eta^+\). More... | |

| virtual void | setTermCriteria (TermCriteria val)=0 |

| Termination criteria of the training algorithm. More... | |

| virtual void | setTrainMethod (int method, double param1=0, double param2=0)=0 |

| Sets training method and common parameters. More... | |

| virtual bool | train (const Ptr< TrainData > &trainData, int flags=0) |

| Trains the statistical model. More... | |

| virtual bool | train (InputArray samples, int layout, InputArray responses) |

| Trains the statistical model. More... | |

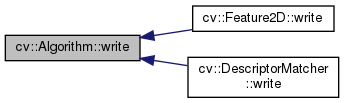

| virtual void | write (FileStorage &fs) const |

| Stores algorithm parameters in a file storage. More... | |

| void | write (const Ptr< FileStorage > &fs, const String &name=String()) const |

| Simplified API for language bindings. This is an overloaded member function, provided for convenience. It differs from the above function only in what argument(s) it accepts. More... | |

Static Public Member Functions | |

| static Ptr< ANN_MLP > | create () |

| Creates empty model. More... | |

| static Ptr< ANN_MLP > | load (const String &filepath) |

| Loads and creates a serialized ANN from a file. More... | |

| template<typename _Tp > | |

| static Ptr< _Tp > | load (const String &filename, const String &objname=String()) |

| Loads algorithm from the file. More... | |

| template<typename _Tp > | |

| static Ptr< _Tp > | loadFromString (const String &strModel, const String &objname=String()) |

| Loads algorithm from a String. More... | |

| template<typename _Tp > | |

| static Ptr< _Tp > | read (const FileNode &fn) |

| Reads algorithm from the file node. More... | |

| template<typename _Tp > | |

| static Ptr< _Tp > | train (const Ptr< TrainData > &data, int flags=0) |

| Create and train model with default parameters. More... | |

Protected Member Functions | |

| void | writeFormat (FileStorage &fs) const |

Artificial Neural Networks - Multi-Layer Perceptrons.

Unlike many other models in ML that are constructed and trained at once, in the MLP model these steps are separated. First, a network with the specified topology is created using the non-default constructor or the method ANN_MLP::create. All the weights are set to zeros. Then, the network is trained using a set of input and output vectors. The training procedure can be repeated more than once, that is, the weights can be adjusted based on the new training data.

Additional flags for StatModel::train are available: ANN_MLP::TrainFlags.

possible activation functions

|

inherited |

Train options.

|

virtualinherited |

Computes error on the training or test dataset.

| data | the training data |

| test | if true, the error is computed over the test subset of the data; otherwise it is computed over the training subset. Note that if you loaded a completely different dataset to evaluate an already trained classifier, you will probably want to leave the test subset unset (that is, not call TrainData::setTrainTestSplitRatio) and pass test=false, so that the error is computed over the whole new set. |

| resp | the optional output responses. |

The method uses StatModel::predict to compute the error. For regression models the error is computed as RMS; for classifiers, as the percentage of misclassified samples (0%-100%).
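The two error measures named above can be sketched in plain C++. This is only an illustration of the definitions, assuming RMS means the square root of the mean squared difference; the function names are hypothetical and are not part of the OpenCV API.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Root-mean-square error between predicted and expected regression outputs.
double rmsError(const std::vector<double>& predicted,
                const std::vector<double>& expected) {
    assert(!predicted.empty() && predicted.size() == expected.size());
    double sumSq = 0.0;
    for (std::size_t i = 0; i < predicted.size(); ++i) {
        const double d = predicted[i] - expected[i];
        sumSq += d * d;
    }
    return std::sqrt(sumSq / static_cast<double>(predicted.size()));
}

// Percentage (0-100) of samples whose predicted label differs from the
// expected label.
double misclassifiedPercent(const std::vector<int>& predicted,
                            const std::vector<int>& expected) {
    assert(!predicted.empty() && predicted.size() == expected.size());
    std::size_t wrong = 0;
    for (std::size_t i = 0; i < predicted.size(); ++i) {
        if (predicted[i] != expected[i]) ++wrong;
    }
    return 100.0 * static_cast<double>(wrong) /
           static_cast<double>(predicted.size());
}
```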

|

inlinevirtualinherited |

Clears the algorithm state.

Reimplemented in cv::FlannBasedMatcher, and cv::DescriptorMatcher.

Creates empty model.

Use StatModel::train to train the model, Algorithm::load<ANN_MLP>(filename) to load the pre-trained model. Note that the train method has optional flags: ANN_MLP::TrainFlags.

|

virtualinherited |

Returns true if the Algorithm is empty (e.g. in the very beginning or after unsuccessful read).

Reimplemented from cv::Algorithm.

|

pure virtual |

ANNEAL: Update cooling ratio.

It must be >0 and less than 1. Default value is 0.95.

|

pure virtual |

ANNEAL: Update final temperature.

It must be >=0 and less than initialT. Default value is 0.1.
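To see how the cooling ratio and the two temperatures interact, the sketch below counts temperature steps under the assumption of a geometric schedule, where the temperature is multiplied by the cooling ratio after each step. That schedule, the function name `coolingSteps`, and the initial temperature used in the usage note are assumptions made for illustration; this page does not state how the library applies the ratio.

```cpp
#include <cassert>

// Counts how many temperature levels are visited before the temperature
// falls to finalT or below, assuming T is multiplied by coolingRatio after
// each level. This sketch requires finalT > 0 so the loop ends quickly.
int coolingSteps(double initialT, double finalT, double coolingRatio) {
    assert(coolingRatio > 0.0 && coolingRatio < 1.0);
    assert(finalT > 0.0 && finalT < initialT);
    int steps = 0;
    for (double t = initialT; t > finalT; t *= coolingRatio) {
        ++steps;
    }
    return steps;
}
```

With the documented defaults for the ratio (0.95) and final temperature (0.1), and an illustrative initial temperature of 10, `coolingSteps(10.0, 0.1, 0.95)` returns 90. A ratio closer to 1 cools more slowly and gives more steps.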

|

pure virtual |

|

pure virtual |

|

pure virtual |

BPROP: Strength of the momentum term (the difference between weights on the 2 previous iterations).

This parameter provides some inertia to smooth the random fluctuations of the weights. It can vary from 0 (the feature is disabled) to 1 and beyond. The value 0.1 or so is good enough. Default value is 0.1.

|

pure virtual |

BPROP: Strength of the weight gradient term.

The recommended value is about 0.1. Default value is 0.1.
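The roles of the weight gradient term and the momentum term can be illustrated with the textbook gradient-descent-with-momentum update for a single weight. This is a sketch under that assumption, not the library's implementation; `WeightState` and `backpropStep` are hypothetical names.

```cpp
#include <cassert>

// One scalar weight plus the change applied to it on the previous iteration.
struct WeightState {
    double w;
    double prevDw;
};

// Textbook update: the new change is the previous change scaled by the
// momentum term, minus the gradient scaled by the weight gradient term.
void backpropStep(WeightState& s, double grad,
                  double weightScale = 0.1, double momentumScale = 0.1) {
    assert(weightScale >= 0.0 && momentumScale >= 0.0);
    const double dw = momentumScale * s.prevDw - weightScale * grad;
    s.w += dw;
    s.prevDw = dw;
}
```

Starting from `w = 1.0` with a constant gradient of 2.0, the first step moves the weight by -0.2 and the second by -0.22, since the second step adds a tenth of the previous change. Setting `momentumScale` to 0 disables this inertia.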

|

virtualinherited |

Returns the algorithm string identifier.

This string is used as the top-level XML/YML node tag when the object is saved to a file or string.

Reimplemented in cv::AKAZE, cv::KAZE, cv::SimpleBlobDetector, cv::GFTTDetector, cv::AgastFeatureDetector, cv::FastFeatureDetector, cv::MSER, cv::ORB, cv::BRISK, and cv::Feature2D.

|

pure virtual |

Integer vector specifying the number of neurons in each layer including the input and output layers.

The very first element specifies the number of elements in the input layer. The last element specifies the number of elements in the output layer.
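As a rough illustration of what a layer-size vector implies, the sketch below counts the connection weights of a fully connected network, assuming one bias input per neuron. The bias assumption and the function name `countWeights` are illustrative; the matrices returned by getWeights may be laid out differently and may include additional scaling entries.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Number of trainable weights in a fully connected network, assuming each
// neuron in layer i+1 has one weight per neuron in layer i plus one bias.
std::size_t countWeights(const std::vector<int>& layerSizes) {
    assert(layerSizes.size() >= 2);  // at least input and output layers
    std::size_t total = 0;
    for (std::size_t i = 0; i + 1 < layerSizes.size(); ++i) {
        assert(layerSizes[i] > 0 && layerSizes[i + 1] > 0);
        total += static_cast<std::size_t>(layerSizes[i] + 1) *
                 static_cast<std::size_t>(layerSizes[i + 1]);
    }
    return total;
}
```

For a layer vector of {2, 4, 1} this gives (2+1)*4 + (4+1)*1 = 17 weights.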

|

pure virtual |

RPROP: Initial value \(\Delta_0\) of update-values \(\Delta_{ij}\).

Default value is 0.1.

|

pure virtual |

RPROP: Update-values upper limit \(\Delta_{max}\).

It must be >1. Default value is 50.

|

pure virtual |

RPROP: Update-values lower limit \(\Delta_{min}\).

It must be positive. Default value is FLT_EPSILON.
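The RPROP parameters above bound and scale a per-weight update-value. The sketch below shows the standard RPROP adaptation rule for one update-value, offered as an illustration rather than the library's exact code. The defaults for the increase and decrease factors (1.2 and 0.5) are assumptions not stated on this page, and `RpropParams` and `nextUpdateValue` are hypothetical names.

```cpp
#include <algorithm>
#include <cassert>
#include <cfloat>

// Parameter set mirroring the RPROP getters. dwPlus and dwMinus are assumed
// values; the others follow the defaults documented above.
struct RpropParams {
    double dw0 = 0.1;           // initial update-value
    double dwPlus = 1.2;        // increase factor (assumed)
    double dwMinus = 0.5;       // decrease factor (assumed)
    double dwMin = FLT_EPSILON; // lower limit
    double dwMax = 50.0;        // upper limit
};

// Standard RPROP rule: grow the update-value while the gradient keeps its
// sign, shrink it when the sign flips, and clamp it to [dwMin, dwMax].
double nextUpdateValue(double delta, double prevGrad, double grad,
                       const RpropParams& p) {
    assert(delta > 0.0);
    const double signProduct = prevGrad * grad;
    if (signProduct > 0.0) return std::min(delta * p.dwPlus, p.dwMax);
    if (signProduct < 0.0) return std::max(delta * p.dwMinus, p.dwMin);
    return delta;  // one of the gradients is zero: keep the value
}
```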

|

pure virtual |

|

pure virtual |

|

pure virtual |

Termination criteria of the training algorithm.

You can specify the maximum number of iterations (maxCount) and/or the minimum change in error between iterations that is required for the algorithm to continue (epsilon). Default value is TermCriteria(TermCriteria::MAX_ITER + TermCriteria::EPS, 1000, 0.01).
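The combined criterion can be read as "stop when either limit is reached". The sketch below expresses that reading in plain C++; it assumes the error change is measured as an absolute difference between consecutive iterations, and `shouldStop` is a hypothetical name, not an OpenCV function.

```cpp
#include <cassert>
#include <cmath>

// Returns true when training should stop: either the iteration budget is
// used up, or the error changed by less than epsilon since the last
// iteration. Defaults follow the documented default criteria.
bool shouldStop(int iter, double prevError, double error,
                int maxCount = 1000, double epsilon = 0.01) {
    assert(maxCount > 0 && epsilon >= 0.0);
    return iter >= maxCount || std::fabs(prevError - error) < epsilon;
}
```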

|

pure virtual |

Returns current training method.

|

pure virtualinherited |

Returns the number of variables in training samples.

|

pure virtual |

|

pure virtualinherited |

Returns true if the model is a classifier.

|

pure virtualinherited |

Returns true if the model is trained.

Loads and creates a serialized ANN from a file.

Use ANN_MLP::save to serialize and store an ANN to disk. To load the ANN from that file again, call this function with the path to the file.

| filepath | path to serialized ANN |

|

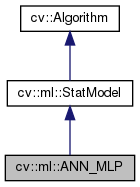

inlinestaticinherited |

Loads algorithm from the file.

| filename | Name of the file to read. |

| objname | The optional name of the node to read (if empty, the first top-level node will be used) |

This is a static template method of Algorithm. It is called with the model class as the template argument, for example Algorithm::load<ANN_MLP>(filename).

In order to make this method work, the derived class must override Algorithm::read(const FileNode& fn).

References CV_Assert, cv::FileNode::empty(), cv::FileStorage::getFirstTopLevelNode(), cv::FileStorage::isOpened(), and cv::FileStorage::READ.

|

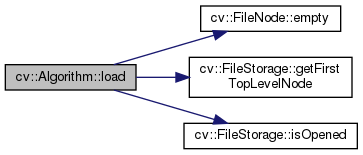

inlinestaticinherited |

Loads algorithm from a String.

| strModel | The string variable containing the model you want to load. |

| objname | The optional name of the node to read (if empty, the first top-level node will be used) |

This is a static template method of Algorithm. It is called with the model class as the template argument, for example Algorithm::loadFromString<SVM>(strModel).

References CV_WRAP, cv::FileNode::empty(), cv::FileStorage::getFirstTopLevelNode(), cv::FileStorage::MEMORY, and cv::FileStorage::READ.

|

pure virtualinherited |

Predicts response(s) for the provided sample(s)

| samples | The input samples, floating-point matrix |

| results | The optional output matrix of results. |

| flags | The optional flags, model-dependent. See cv::ml::StatModel::Flags. |

Implemented in cv::ml::LogisticRegression, and cv::ml::EM.

|

inlinevirtualinherited |

Reads algorithm parameters from a file storage.

Reimplemented in cv::FlannBasedMatcher, cv::DescriptorMatcher, and cv::Feature2D.

|

inlinestaticinherited |

Reads algorithm from the file node.

This is a static template method of Algorithm. It is called with the model class as the template argument, for example Algorithm::read<SVM>(fn).

In order to make this method work, the derived class must override Algorithm::read(const FileNode& fn) and also have a static create() method without parameters (or with all parameters optional).

|

virtualinherited |

Saves the algorithm to a file.

In order to make this method work, the derived class must implement Algorithm::write(FileStorage& fs).

|

pure virtual |

Initialize the activation function for each neuron.

Currently the default and the only fully supported activation function is ANN_MLP::SIGMOID_SYM.

| type | The type of activation function. See ANN_MLP::ActivationFunctions. |

| param1 | The first parameter of the activation function, \(\alpha\). Default value is 0. |

| param2 | The second parameter of the activation function, \(\beta\). Default value is 0. |
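For reference, the symmetrical sigmoid is usually written as \(f(x) = \beta (1 - e^{-\alpha x}) / (1 + e^{-\alpha x})\). That formula comes from the general OpenCV MLP documentation, not from this page, so treat it as an assumption here. The sketch below evaluates it through the mathematically equivalent form \(\beta \tanh(\alpha x / 2)\), which avoids overflow for large negative inputs; `sigmoidSym` is a hypothetical name.

```cpp
#include <cassert>
#include <cmath>

// Symmetrical sigmoid beta * (1 - exp(-alpha*x)) / (1 + exp(-alpha*x)),
// computed as beta * tanh(alpha * x / 2) for numerical stability.
double sigmoidSym(double x, double alpha, double beta) {
    assert(std::isfinite(x) && std::isfinite(alpha) && std::isfinite(beta));
    return beta * std::tanh(0.5 * alpha * x);
}
```

The output is zero at `x = 0`, approaches `beta` for large positive inputs and `-beta` for large negative inputs, so `beta` sets the output range and `alpha` sets the steepness.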

|

pure virtual |

ANNEAL: Update cooling ratio.

|

pure virtual |

Set/initialize anneal RNG.

|

pure virtual |

ANNEAL: Update final temperature.

|

pure virtual |

ANNEAL: Update initial temperature.

|

pure virtual |

ANNEAL: Update iteration per step.

|

pure virtual |

BPROP: Strength of the momentum term (the difference between weights on the 2 previous iterations).

|

pure virtual |

BPROP: Strength of the weight gradient term.

|

pure virtual |

Integer vector specifying the number of neurons in each layer including the input and output layers.

The very first element specifies the number of elements in the input layer. The last element specifies the number of elements in the output layer. Default value is empty Mat.

|

pure virtual |

RPROP: Initial value \(\Delta_0\) of update-values \(\Delta_{ij}\).

|

pure virtual |

RPROP: Update-values upper limit \(\Delta_{max}\).

|

pure virtual |

RPROP: Update-values lower limit \(\Delta_{min}\).

|

pure virtual |

RPROP: Decrease factor \(\eta^-\).

|

pure virtual |

RPROP: Increase factor \(\eta^+\).

|

pure virtual |

Termination criteria of the training algorithm.

|

pure virtual |

Sets training method and common parameters.

| method | Default value is ANN_MLP::RPROP. See ANN_MLP::TrainingMethods. |

| param1 | passed to setRpropDW0 for ANN_MLP::RPROP, to setBackpropWeightScale for ANN_MLP::BACKPROP, and to initialT for ANN_MLP::ANNEAL. |

| param2 | passed to setRpropDWMin for ANN_MLP::RPROP, to setBackpropMomentumScale for ANN_MLP::BACKPROP, and to finalT for ANN_MLP::ANNEAL. |

|

virtualinherited |

Trains the statistical model.

| trainData | training data that can be loaded from file using TrainData::loadFromCSV or created with TrainData::create. |

| flags | optional flags, depending on the model. Some of the models can be updated with the new training samples, not completely overwritten (such as NormalBayesClassifier or ANN_MLP). |

|

virtualinherited |

Trains the statistical model.

| samples | training samples |

| layout | See ml::SampleTypes. |

| responses | vector of responses associated with the training samples. |

|

inlinestaticinherited |

Create and train model with default parameters.

The class must implement a static create() method with no parameters or with default values for all parameters.

|

inlinevirtualinherited |

Stores algorithm parameters in a file storage.

Reimplemented in cv::FlannBasedMatcher, cv::DescriptorMatcher, and cv::Feature2D.

References CV_WRAP.

Referenced by cv::Feature2D::write(), and cv::DescriptorMatcher::write().

|

inherited |

Simplified API for language bindings. This is an overloaded member function, provided for convenience. It differs from the above function only in what argument(s) it accepts.

|

protectedinherited |