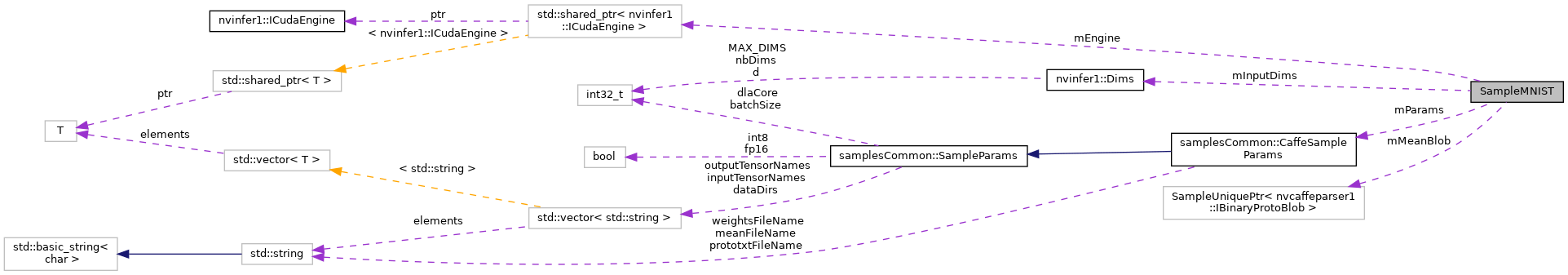

The SampleMNIST class implements the MNIST sample.

Public Member Functions

| Type | Member | Description |
|---|---|---|
| | `SampleMNIST(const samplesCommon::CaffeSampleParams &params)` | |
| `bool` | `build()` | Builds the network engine. |
| `bool` | `infer()` | Runs the TensorRT inference engine for this sample. |
| `bool` | `teardown()` | Cleans up any state created in the sample class. |

Private Types

| Type | Member | Description |
|---|---|---|
| `template <typename T> using` | `SampleUniquePtr = std::unique_ptr<T, samplesCommon::InferDeleter>` | |

Private Member Functions

| Type | Member | Description |
|---|---|---|
| `bool` | `constructNetwork(SampleUniquePtr<nvcaffeparser1::ICaffeParser> &parser, SampleUniquePtr<nvinfer1::INetworkDefinition> &network)` | Uses a Caffe parser to create the MNIST network and marks the output layers. |
| `bool` | `processInput(const samplesCommon::BufferManager &buffers, const std::string &inputTensorName, int inputFileIdx) const` | Reads the input and mean data, preprocesses them, and stores the result in a managed buffer. |
| `bool` | `verifyOutput(const samplesCommon::BufferManager &buffers, const std::string &outputTensorName, int groundTruthDigit) const` | Verifies that the output is correct and prints it. |

Private Attributes

| Type | Member | Description |
|---|---|---|
| `std::shared_ptr<nvinfer1::ICudaEngine>` | `mEngine {nullptr}` | The TensorRT engine used to run the network. |
| `samplesCommon::CaffeSampleParams` | `mParams` | The parameters for the sample. |
| `nvinfer1::Dims` | `mInputDims` | The dimensions of the input to the network. |
| `SampleUniquePtr<nvcaffeparser1::IBinaryProtoBlob>` | `mMeanBlob` | |

SampleMNIST creates the network from a trained Caffe MNIST classification model.

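`template <typename T> using SampleMNIST::SampleUniquePtr = std::unique_ptr<T, samplesCommon::InferDeleter>` [private]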
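SampleUniquePtr pairs `std::unique_ptr` with a custom deleter, so TensorRT objects are released through that deleter instead of a plain `delete`. The following is a minimal stand-in for the pattern. It assumes a deleter that calls the object's `destroy()` method, which is how older TensorRT interfaces were released. `FakeTrtObject` and `InferDeleterSketch` are hypothetical names and are not part of samplesCommon.

```cpp
#include <memory>

// Hypothetical stand-in for a TensorRT interface object that is released
// through destroy() rather than by calling delete directly.
struct FakeTrtObject
{
    static int liveCount; // objects created but not yet destroyed

    FakeTrtObject() { ++liveCount; }

    void destroy()
    {
        --liveCount;
        delete this; // the object frees itself
    }
};
int FakeTrtObject::liveCount = 0;

// Hypothetical deleter in the spirit of samplesCommon::InferDeleter.
struct InferDeleterSketch
{
    template <typename T>
    void operator()(T* obj) const
    {
        if (obj != nullptr)
        {
            obj->destroy();
        }
    }
};

// Same shape as the sample's alias: unique ownership, released via the deleter.
template <typename T>
using SampleUniquePtr = std::unique_ptr<T, InferDeleterSketch>;
```

You can also pass the same deleter to a `std::shared_ptr` constructor, for example `std::shared_ptr<FakeTrtObject> s(new FakeTrtObject(), InferDeleterSketch());`. The object is then destroyed exactly once, when its last owner releases it.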

`SampleMNIST::SampleMNIST(const samplesCommon::CaffeSampleParams &params)` [inline]

`bool SampleMNIST::build()`

Builds the network engine.

Creates the network, configures the builder, and creates the network engine.

This function builds the MNIST network by parsing the Caffe model. It then builds the engine used to run MNIST and stores that engine in mEngine.
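Here is a sketch of only the control flow of those steps. It assumes each stage reports failure by returning `false` or a null pointer, and that build() returns `false` at the first failure. Builder configuration is omitted. `NetworkSketch`, `EngineSketch`, `parseModel`, and `buildEngine` are hypothetical stand-ins and are not TensorRT or Caffe parser APIs.

```cpp
#include <memory>
#include <string>
#include <vector>

// Hypothetical stand-ins for a parsed network and a built engine.
struct NetworkSketch
{
    std::vector<std::string> outputs; // tensors marked as network outputs
};

struct EngineSketch
{
    std::vector<std::string> outputs;
};

// Hypothetical parse step: fails if there is nothing to mark as an output.
bool parseModel(NetworkSketch& network, const std::vector<std::string>& outputNames)
{
    if (outputNames.empty())
    {
        return false;
    }
    network.outputs = outputNames; // "mark" the requested outputs
    return true;
}

// Hypothetical build step: returns nullptr on failure.
std::shared_ptr<EngineSketch> buildEngine(const NetworkSketch& network)
{
    if (network.outputs.empty())
    {
        return nullptr;
    }
    auto engine = std::make_shared<EngineSketch>();
    engine->outputs = network.outputs;
    return engine;
}

class BuildSketch
{
public:
    // Stop at the first failed stage; keep an engine only if every stage succeeded.
    bool build(const std::vector<std::string>& outputNames)
    {
        NetworkSketch network;
        if (!parseModel(network, outputNames))
        {
            return false;
        }
        mEngine = buildEngine(network);
        return mEngine != nullptr;
    }

    std::shared_ptr<EngineSketch> mEngine{nullptr};
};
```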

`bool SampleMNIST::infer()`

Runs the TensorRT inference engine for this sample.

This function is the main execution function of the sample. It allocates the buffer, sets inputs, executes the engine, and verifies the output.

`bool SampleMNIST::teardown()`

Used to clean up any state created in the sample class.

Cleans up the libprotobuf state, since parsing is complete.

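`bool SampleMNIST::constructNetwork(SampleUniquePtr<nvcaffeparser1::ICaffeParser> &parser, SampleUniquePtr<nvinfer1::INetworkDefinition> &network)` [private]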

Uses a Caffe parser to create the MNIST network and marks the output layers.

| Parameter | Description |
|---|---|
| `parser` | The Caffe parser used to create the MNIST network |
| `network` | Pointer to the network that will be populated with the MNIST network |

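`bool SampleMNIST::processInput(const samplesCommon::BufferManager &buffers, const std::string &inputTensorName, int inputFileIdx) const` [private]

Reads the input and mean data, preprocesses them, and stores the result in a managed buffer.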
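Here is a minimal sketch of one common form of this preprocessing. It assumes 8-bit grayscale pixels and per-pixel mean subtraction into a float buffer. `subtractMean` is a hypothetical helper. In the sample itself, the result goes into the managed buffer for `inputTensorName`.

```cpp
#include <cstddef>
#include <cstdint>
#include <stdexcept>
#include <vector>

// Hypothetical helper: subtracts a per-pixel mean from raw 8-bit pixels and
// returns the float values that would be copied into the input buffer.
std::vector<float> subtractMean(const std::vector<std::uint8_t>& pixels,
                                const std::vector<float>& mean)
{
    if (pixels.size() != mean.size())
    {
        throw std::invalid_argument("pixel and mean sizes differ");
    }
    std::vector<float> out(pixels.size());
    for (std::size_t i = 0; i < pixels.size(); ++i)
    {
        out[i] = static_cast<float>(pixels[i]) - mean[i];
    }
    return out;
}
```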

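`bool SampleMNIST::verifyOutput(const samplesCommon::BufferManager &buffers, const std::string &outputTensorName, int groundTruthDigit) const` [private]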

Verifies that the output is correct and prints it.
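Here is a minimal sketch of such a check. It makes several assumptions:

- The output holds one probability in [0, 1] for each of the ten digits.
- "Correct" means the top-scoring digit equals `groundTruthDigit` and its probability exceeds a confidence threshold.
- Printing takes the form of a text histogram.

The 0.9 threshold and the name `verifyScores` are assumptions for illustration only.

```cpp
#include <array>
#include <cstddef>
#include <iostream>
#include <string>

constexpr std::size_t kDigits = 10; // MNIST has ten classes (0-9)

// Hypothetical check: prints a '*' histogram of the scores and returns true
// when the top-scoring digit matches the ground truth with enough confidence.
bool verifyScores(const std::array<float, kDigits>& prob, int groundTruthDigit,
                  float minConfidence = 0.9f)
{
    std::size_t best = 0;
    for (std::size_t i = 0; i < kDigits; ++i)
    {
        if (prob[i] > prob[best])
        {
            best = i;
        }
        // One '*' per tenth of probability, rounded to the nearest integer.
        std::cout << i << ": "
                  << std::string(static_cast<std::size_t>(prob[i] * 10.0f + 0.5f), '*') << '\n';
    }
    return static_cast<int>(best) == groundTruthDigit && prob[best] > minConfidence;
}
```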

`std::shared_ptr<nvinfer1::ICudaEngine> SampleMNIST::mEngine {nullptr}` [private]

The TensorRT engine used to run the network.

`samplesCommon::CaffeSampleParams SampleMNIST::mParams` [private]

The parameters for the sample.

`nvinfer1::Dims SampleMNIST::mInputDims` [private]

The dimensions of the input to the network.
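A dense input buffer needs as many elements as the product of these dimensions. Here is a minimal sketch of that count. It uses a hypothetical `DimsSketch` struct that mirrors the `nbDims`/`d[]` layout of `nvinfer1::Dims`. The 1x28x28 shape used in the check is an assumed single-channel MNIST digit.

```cpp
#include <cstdint>

// Hypothetical stand-in mirroring the nbDims / d[] fields of nvinfer1::Dims.
struct DimsSketch
{
    static constexpr int kMaxDims = 8;
    int nbDims;
    int d[kMaxDims];
};

// Number of elements in a dense tensor of the given shape.
std::int64_t volume(const DimsSketch& dims)
{
    std::int64_t v = 1;
    for (int i = 0; i < dims.nbDims; ++i)
    {
        v *= dims.d[i];
    }
    return v;
}
```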

`SampleUniquePtr<nvcaffeparser1::IBinaryProtoBlob> SampleMNIST::mMeanBlob` [private]